How to Track LSAT Score Progress

A real LSAT® score tracker goes beyond total scores. Track question type accuracy, difficulty patterns, and pacing to find where improvement is coming from.

You're taking practice tests. Your scores bounce around — 158, 162, 160, 165, 163. Are you improving?

The honest answer: you can't tell from those numbers alone. A total score compresses hours of reasoning across three scored sections (two Logical Reasoning, one Reading Comprehension) and dozens of question categories into a single number, and the unscored variable section doesn't count toward it at all. It tells you roughly where you are. It doesn't tell you why, and it doesn't tell you what to do about it.

Your Total Score Is a Blunt Instrument

Two students can both score 162 for completely different reasons. One is crushing Logical Reasoning but bleeding points in Reading Comprehension. The other is the opposite. Their study plans should be completely different, but their scores are identical.

In our experience, students who track question type accuracy tend to improve faster than those who only track total scores — the feedback loop is that much tighter.

If you're tracking progress by total score alone, you're flying blind. A real LSAT® score tracker goes beyond logging numbers — it shows you where your score improvement is actually coming from.

What to Track Instead of Your LSAT® Total Score

Section scores over time

Track your raw score for each scored section (two LR, one RC) across every practice test. This immediately reveals which sections are improving and which are stuck.

A student whose LR went from -8 to -4 over five tests but whose RC stayed at -7 the whole time knows exactly where to focus. Without section tracking, they'd just see a total that went up by four points and think "I'm improving" — without realizing half their study time is going toward a section that's already strong.

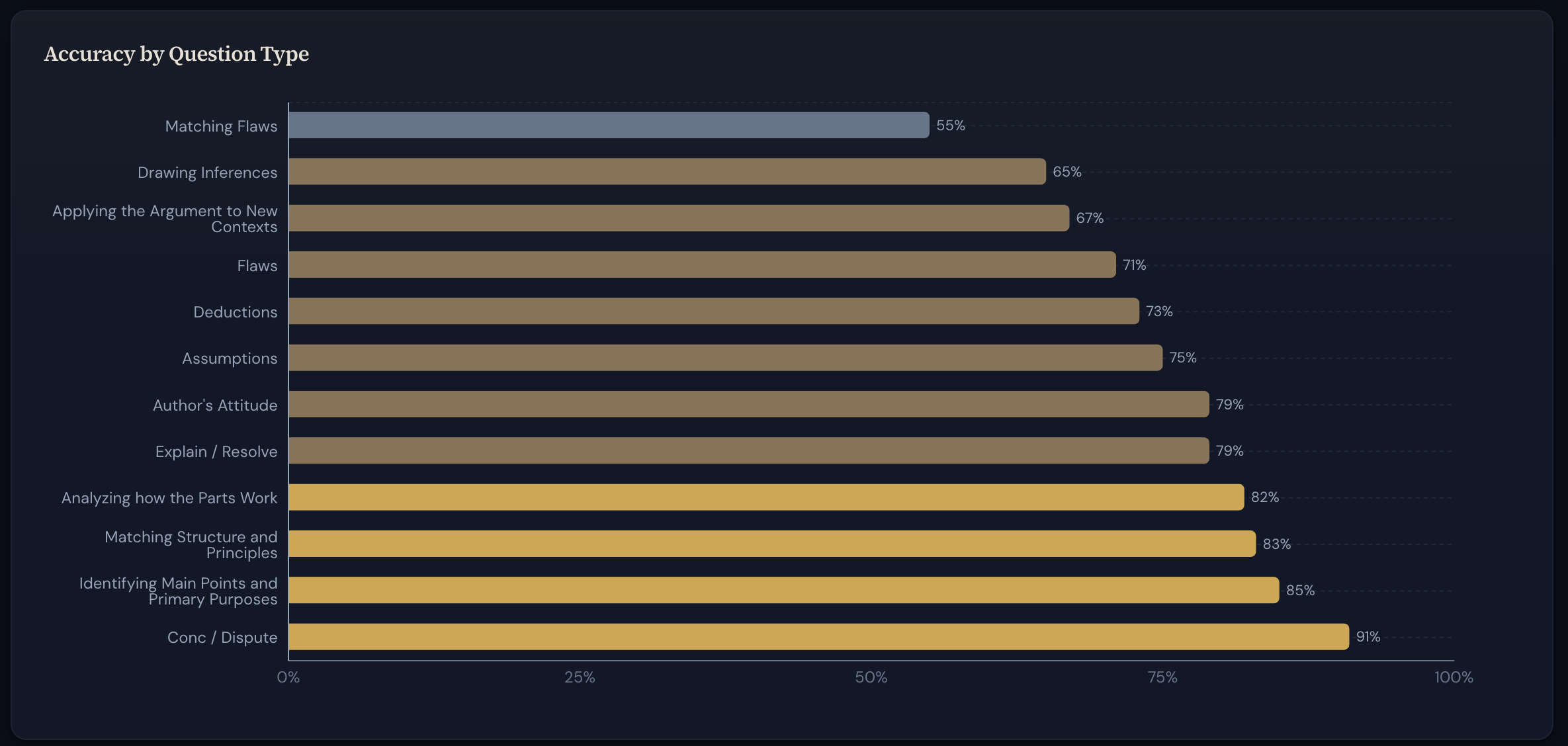
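As a rough sketch of section-level tracking, the snippet below logs questions missed per section and reports the change from the first test to the latest. The endpoints mirror the example above (LR from -8 to -4, RC flat at -7). The in-between values and the names `section_misses` and `miss_change` are made up for illustration.

```python
from typing import Dict, List

# Hypothetical log: questions missed per section on each practice test, oldest first.
# Only the first and last values come from the example; the rest are invented.
section_misses: Dict[str, List[int]] = {
    "LR": [8, 7, 6, 5, 4],
    "RC": [7, 7, 7, 7, 7],
}

def miss_change(misses: List[int]) -> int:
    """Change in questions missed from the first test to the latest (negative = improving)."""
    return misses[-1] - misses[0]

changes = {section: miss_change(m) for section, m in section_misses.items()}

for section, delta in changes.items():
    status = "improving" if delta < 0 else "stuck or slipping"
    print(f"{section}: {delta:+d} misses ({status})")
```

A first-to-last difference is the crudest possible trend measure. It is only meant to show that the per-section view separates a section that is moving from one that is not.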

Question type accuracy — the most valuable metric you can track

This is the single most actionable number in your prep, and the one fewest people track. Your accuracy on each question type — Flaw, Necessary Assumption, Strengthen, and Weaken for LR; Main Point, Inference, Author's Attitude, and Passage Structure for RC — tells you which reasoning skills need work.

Maybe you're hitting 85% on Strengthen but only 50% on Necessary Assumption. That's a specific, actionable finding: you need to drill identifying unstated assumptions. Without question type tracking, that pattern is invisible.
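If you log each question as a (question type, correct?) pair, the accuracy breakdown is a few lines of Python. This is a minimal sketch with made-up data chosen to reproduce the 85% and 50% figures above. The function name and the log format are assumptions, not any platform's export format.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

def accuracy_by_type(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the fraction answered correctly for each question type."""
    totals: Dict[str, int] = defaultdict(int)
    right: Dict[str, int] = defaultdict(int)
    for qtype, is_correct in results:
        totals[qtype] += 1
        right[qtype] += int(is_correct)
    return {qtype: right[qtype] / totals[qtype] for qtype in totals}

# Made-up log pooled across several tests: (question type, answered correctly?)
log = (
    [("Strengthen", True)] * 17 + [("Strengthen", False)] * 3
    + [("Necessary Assumption", True)] * 5 + [("Necessary Assumption", False)] * 5
)

acc = accuracy_by_type(log)
weakest = min(acc, key=acc.get)  # the type with the lowest accuracy

for qtype, value in sorted(acc.items(), key=lambda item: item[1]):
    print(f"{qtype}: {value:.0%}")
```

Sorting from weakest to strongest puts the drilling priority at the top of the output.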

One note on question type labels: different prep companies categorize LSAT® questions differently. The taxonomy framework you use — LSAC, PowerScore, 7Sage, Loophole, or Kaplan — matters less than tracking consistently within one of them. Pick one and stick with it so your accuracy trends are comparable across tests.

Accuracy by difficulty

LawHub provides difficulty ratings in its score reports (currently on a 1-to-5 scale, though the presentation may vary as LawHub updates). Track your accuracy at each level.

Missing 1- and 2-star questions is a pacing or carelessness problem: you have the skills but aren't deploying them consistently. Missing 4- and 5-star questions is expected, but tracking accuracy there over time shows whether your ceiling is rising. Missing 3-star questions at a high rate usually points to specific concept gaps that targeted drilling can fix.
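The same bookkeeping works for difficulty. The sketch below assumes you have copied each question's difficulty level (1 to 5) and result into a list by hand. The data is invented and the names are placeholders.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

def accuracy_by_difficulty(results: Iterable[Tuple[int, bool]]) -> Dict[int, float]:
    """Map each difficulty level to the fraction of questions answered correctly."""
    totals: Dict[int, int] = defaultdict(int)
    right: Dict[int, int] = defaultdict(int)
    for level, is_correct in results:
        totals[level] += 1
        right[level] += int(is_correct)
    return {level: right[level] / totals[level] for level in sorted(totals)}

# Made-up results: (difficulty level, answered correctly?)
results: List[Tuple[int, bool]] = [
    (1, True), (1, True), (2, True), (2, False),
    (3, True), (3, False), (4, False), (5, False), (5, True),
]

by_level = accuracy_by_difficulty(results)

# Misses at levels 1 and 2 are the ones most likely caused by pacing or carelessness.
easy_misses = sum(1 for level, ok in results if level <= 2 and not ok)

print(by_level)
print("easy misses:", easy_misses)
```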

Pacing patterns

If you're consistently missing the last five questions in a section, that's not a knowledge gap — it's time management. If your accuracy is consistently worse in your second scored section, that's a stamina issue requiring different training than a content gap. These patterns don't show up in your total score but they're often the easiest problems to fix. Record the question number you were on when time was called, and note whether you guessed on the final questions or ran out entirely. Over several tests, you'll see whether pacing is costing you more points than content gaps.
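One way to make the "last five questions" check concrete is to split a section's results into everything before the final five and the final five themselves, then compare accuracy. This is a sketch on invented data. The 25-question section length and the name `pacing_split` are assumptions.

```python
from typing import List, Tuple

def pacing_split(answers: List[bool], tail: int = 5) -> Tuple[float, float]:
    """Return (accuracy before the final `tail` questions, accuracy on the final `tail`)."""
    if len(answers) <= tail:
        raise ValueError("section must have more questions than the tail size")
    head, last = answers[:-tail], answers[-tail:]
    return sum(head) / len(head), sum(last) / len(last)

# Made-up 25-question section in question order: strong start, weak finish.
section = [True] * 18 + [False] * 2 + [True] + [False] * 4

early, late = pacing_split(section)
print(f"before the last five: {early:.0%}, last five: {late:.0%}")
```

A large gap that repeats across several tests points to pacing. A gap on a single test could simply be a hard final passage or question cluster.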

Real Patterns vs. Noise

LSAT® scores have natural variance. LSAC has historically reported a standard error of measurement of roughly 2.6 points (per their technical documentation), meaning the same person taking two equivalent tests might score 2-3 points apart purely because of random variation in attention, fatigue, and question mix.

Don't react to a single test. If your scores have been 162, 164, 163, 165 and you drop to 160, it's probably noise. Three consecutive drops are a pattern worth investigating.

Look for trends across 3-5 tests. That's the minimum sample where patterns become meaningful. A 60% accuracy on Flaw questions in one test doesn't tell you much. Staying in the 55-65% range across five tests tells you a lot.

Compare difficulty levels, not just totals. If your total dropped but you got more hard questions right, you probably had an unlucky distribution of easy questions. The underlying improvement is real even though the score went down.
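The "one drop is noise, three in a row is a pattern" rule can be checked mechanically. The sketch below counts how many consecutive test-to-test decreases end the score history. The function name is a placeholder. The first score list is the one from the example above and the second is invented.

```python
from typing import List

def trailing_drops(scores: List[int]) -> int:
    """Count consecutive test-to-test decreases at the end of the score history."""
    count = 0
    for i in range(len(scores) - 1, 0, -1):
        if scores[i] < scores[i - 1]:
            count += 1
        else:
            break
    return count

one_bad_day = [162, 164, 163, 165, 160]   # a single drop after an upward run
real_slide = [165, 164, 162, 160]         # made-up history with three drops in a row

for history in (one_bad_day, real_slide):
    drops = trailing_drops(history)
    verdict = "worth investigating" if drops >= 3 else "probably noise"
    print(history, "->", drops, "drop(s):", verdict)
```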

For a deeper look at what score fluctuations mean for your test day performance, see our guide to LSAT® score prediction.

When to check your trends: Review your dashboard every 3–4 tests, not after every single one. Checking your accuracy rates after every PT feeds anxiety, not insight. Let the data accumulate enough to show real patterns before you adjust your study plan.

When Your LSAT® Score Has Plateaued — And What to Do

A plateau is real when your scores have been within a 2-3 point range for five or more tests and your question type accuracy rates have also flattened. If your total is flat but LR accuracy is improving while RC is declining, you haven't plateaued. You have two opposing trends that cancel out in the total and mask your progress.
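The plateau test above has two conditions, and both can be written down. In this sketch the 5-test window and 3-point range follow the text. The 5-percentage-point tolerance for "flattened" accuracy is an assumption, since the article gives no number, and the data is invented.

```python
from typing import Dict, List

def is_plateau(
    scores: List[int],
    accuracy: Dict[str, List[float]],
    window: int = 5,
    score_range: int = 3,
    acc_tolerance: float = 0.05,  # assumed threshold for "flat" accuracy
) -> bool:
    """True only if recent totals AND every tracked accuracy series are flat."""
    if len(scores) < window:
        return False  # not enough tests to call anything a plateau
    recent = scores[-window:]
    if max(recent) - min(recent) > score_range:
        return False
    for series in accuracy.values():
        tail = series[-window:]
        if max(tail) - min(tail) > acc_tolerance:
            return False  # something underneath the total is still moving
    return True

flat_scores = [162, 163, 162, 164, 163]
offsetting = {"LR": [0.70, 0.73, 0.76, 0.79, 0.82], "RC": [0.80, 0.77, 0.74, 0.71, 0.68]}
truly_flat = {"LR": [0.75, 0.76, 0.75, 0.77, 0.76], "RC": [0.70, 0.71, 0.70, 0.69, 0.70]}

print(is_plateau(flat_scores, offsetting))  # LR rising while RC falls
print(is_plateau(flat_scores, truly_flat))  # totals and accuracy both flat
```

The same function accepts per-question-type series in place of the per-section series used here.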

Common causes of genuine plateaus:

Not reviewing properly. Taking more tests without thorough review doesn't build skills. If you're not doing deep wrong answer analysis after each PT, start there, and use a template to organize your review. Our guide to reviewing practice tests effectively covers a structured three-pass method.

Drilling strengths instead of weaknesses. Practicing question types you're already good at feels productive but isn't. Weight your study time toward your worst question types — that's where the available points are.

Ignoring easy misses. The 1- and 2-star questions you're missing are free points: questions within your ability that you're losing to carelessness or rushing. Fixing these is usually faster and more impactful than grinding on 4- and 5-star questions.

Same method, same results. If your accuracy on specific question types hasn't budged after weeks of the same approach, the method isn't working for those types. Try a different prep book's explanation, untimed deep-dive practice, or work with a tutor on that specific skill.
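For the "drilling strengths instead of weaknesses" cause, one simple way to weight study time is to split it in proportion to each type's miss rate. This proportional rule is an illustrative assumption rather than a method the article prescribes, and the accuracy figures and the 120-minute budget are made up.

```python
from typing import Dict

def allocate_minutes(accuracy: Dict[str, float], total_minutes: int) -> Dict[str, int]:
    """Split study time across question types in proportion to miss rate (1 - accuracy)."""
    miss_rates = {qtype: 1.0 - acc for qtype, acc in accuracy.items()}
    total_miss = sum(miss_rates.values())
    if total_miss == 0:
        raise ValueError("no misses recorded, nothing to prioritize")
    return {
        qtype: round(total_minutes * rate / total_miss)
        for qtype, rate in miss_rates.items()
    }

# Made-up accuracy rates by question type.
current = {"Strengthen": 0.85, "Necessary Assumption": 0.50, "Flaw": 0.65}
plan = allocate_minutes(current, total_minutes=120)
print(plan)
```

Because each share is rounded independently, the minutes may not always sum exactly to the budget.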

Tracking Tools

Several platforms offer some form of score tracking. 7Sage's analytics dashboard tracks accuracy by question type if you're using their curriculum. LSAT Demon surfaces trend data within its platform. For dedicated tracking across any prep source, you have a few options.

You can track all of this in a spreadsheet. The challenge is manually categorizing every wrong answer by question type, difficulty, and section across every test, then building your own charts. It works, but the overhead discourages most people from keeping it up.
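If you go the spreadsheet route, most of the work is settling on one row per question with consistent columns. The sketch below shows one possible layout written as CSV. The column names, test labels, and sample rows are hypothetical, and it writes to an in-memory buffer so it runs without creating a file.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields

@dataclass
class QuestionResult:
    """One row per question; column names are illustrative, not a standard."""
    test: str
    section: str
    question: int
    question_type: str
    difficulty: int
    correct: bool

rows = [
    QuestionResult("PT 1", "LR1", 14, "Necessary Assumption", 3, False),
    QuestionResult("PT 1", "RC", 22, "Inference", 4, True),
]

# Swap the buffer for open("lsat_log.csv", "w", newline="") to write a real file.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(QuestionResult)])
writer.writeheader()
writer.writerows(asdict(row) for row in rows)

print(buffer.getvalue())
```

With one row per question, the accuracy-by-type, accuracy-by-difficulty, and pacing views all become filters or pivot tables over the same sheet.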

ScoreGap does this automatically. It captures question-level data from LawHub, categorizes everything by question type in whichever framework you prefer, and tracks accuracy trends across tests. The Review Panel opens right alongside your score report for in-context journaling, and your dashboard shows at a glance which types are improving, which are stuck, and where the biggest gains are — alongside your wrong answer journal entries for each question.

However you track it, the principle is the same: stop watching your total score and start watching the metrics underneath it. That's where the actionable information lives.

The students who improve fastest aren't the ones who take the most practice tests — they're the ones who know exactly where their points are hiding. Whatever tool you use, track the metrics that actually matter.